Sam Altman, CEO of OpenAI, speaks at the meeting of the World Economic Forum in Davos, Switzerland. (Denis Balibouse/Reuters)

AI shouldn’t make any decisions

OpenAI Quietly Deletes Ban on Using ChatGPT for “Military and Warfare”

Good job, Sam Altman. Saying one thing and doing the opposite. There are already enough conspiracy theories about Davos running the world, and the creepy eye-scanning orb guy isn’t helping.

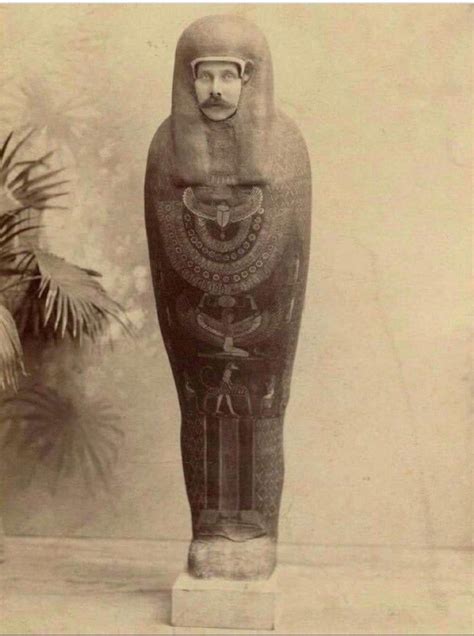

Worldcoin, founded by US tech entrepreneur Sam Altman, offers free crypto tokens to people who agree to have their eyeballs scanned.

What a perfect sentence to sum up 2023 with.

So just like shitty biased algorithms shouldn’t be making life-changing decisions on folks’ employability, loan approvals, which areas get more/tougher policing, etc. I like stating obvious things, too. A robot pulling the trigger isn’t the only “life-or-death” choice that will be (is!) automated.

Ummm…no fucking shit. Who was thinking that was a good idea?

Probably about half of the executives this guy talks to.

AI will be used to increase shareholder dividends. If your company just happens to involve healthcare, warfare, etc., and AI makes decisions to maximize profit, then you’re just collateral damage with no human to blame. Sorry your husband died of cancer, the computer did it.

Yup, my job sent us to an AI/ML training program from a top cloud computing provider, and there were a few hospital execs there too.

They were absolutely giddy about being able to use it to deny unprofitable medical care. It was disgusting.

When there’s no human to blame because the robot made the decision, the CEO should carry all the blame.