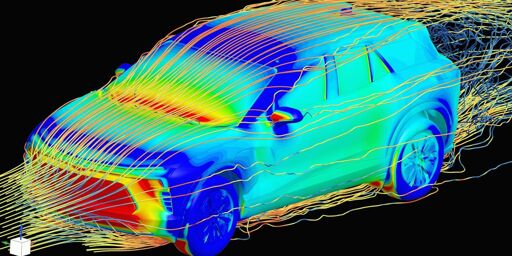

…Previously, a creative design engineer would develop a 3D model of a new car concept. This model would be sent to aerodynamics specialists, who would run physics simulations to determine the coefficient of drag of the proposed car—an important metric for energy efficiency of the vehicle. This simulation phase would take about two weeks, and the aerodynamics engineer would then report the drag coefficient back to the creative designer, possibly with suggested modifications.
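For readers unfamiliar with the metric, the drag coefficient is the standard dimensionless ratio from fluid dynamics that relates drag force to flow conditions. The symbols below are the usual textbook ones, not notation taken from the article:

$$
C_d = \frac{2 F_d}{\rho \, v^2 A}
$$

Here $F_d$ is the drag force, $\rho$ is the air density, $v$ is the speed of the car relative to the air, and $A$ is the reference (typically frontal) area. A lower $C_d$ means less force to overcome at a given speed, which is why it matters for energy efficiency.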

Now, GM has trained an in-house large physics model on those simulation results. The AI takes in a 3D car model and outputs a coefficient of drag in a matter of minutes. “We have experts in the aerodynamics and the creative studio now who can sit together and iterate instantly to make decisions [about] our future products,” says Rene Strauss, director of virtual integration engineering at GM…

“What we’re seeing is that actually, these tools are empowering the engineers to be much more efficient,” Tschammer says. “Before, these engineers would spend a lot of time on low added value tasks, whereas now these manual tasks from the past can be automated using these AI models, and the engineers can focus on taking the design decisions at the end of the day. We still need engineers more than ever.”

accuracy is not a huge concern at the design stage

Then I doubt they are running the mentioned most accurate, two-week-long physics solvers at this stage either. You only do that when you need accuracy. A quick simulation doesn’t take long.

I’m failing to see why the creative writing machine is better than a simulation set to ‘rough’.

I’m failing to see why the creative writing machine is better than a simulation set to ‘rough’.

The problem is that you saw AI and thought LLM.

Machine learning is a big field. Neural networks are a subset of that field, and LLMs are only a single application of a specific type of neural network (the Transformer model) to a specific task (next-token prediction).

The only reason that LLMs and image generation models are the most visible is that training a neural network requires a large amount of data, and the largest repository of public data, the Internet, is primarily text and images. So, text and image models were the first large models to be trained.

The most exciting and potentially impactful uses of AI are not LLMs. Things like protein folding and robotics will have more of an impact on the world than chatbots.

In this case, generating fast approximations for physical modeling can save a ton of compute time for engineering work.
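To make the "fast approximation" idea concrete, here is a minimal sketch of a surrogate model. It is not GM's method: `slow_simulation` is a hypothetical toy stand-in for an expensive solver, and the surrogate is plain piecewise-linear interpolation rather than a neural network. The pattern is the same, though: pay for solver runs once up front, then answer new queries cheaply.

```python
import bisect
import math


def slow_simulation(shape_param: float, steps: int = 20_000) -> float:
    """Hypothetical stand-in for an expensive solver.

    Integrates sin(t)^2 from 0 to shape_param with the midpoint rule.
    """
    h = shape_param / steps
    return h * sum(math.sin((i + 0.5) * h) ** 2 for i in range(steps))


def build_surrogate(sample_points):
    """Run the slow solver once per sample point, then answer by interpolation."""
    xs = sorted(sample_points)
    ys = [slow_simulation(x) for x in xs]

    def surrogate(x: float) -> float:
        # Find the pair of training samples that brackets x (clamped to the grid).
        i = bisect.bisect_right(xs, x) - 1
        i = max(0, min(i, len(xs) - 2))
        t = (x - xs[i]) / (xs[i + 1] - xs[i])
        return ys[i] + t * (ys[i + 1] - ys[i])

    return surrogate


# Offline, expensive: 31 solver runs on a grid from 0.0 to 3.0.
fast_model = build_surrogate([i / 10 for i in range(31)])

# Online, cheap: one lookup instead of a fresh solver run.
estimate = fast_model(1.25)
reference = slow_simulation(1.25)
print(f"surrogate={estimate:.4f} solver={reference:.4f}")
```

The surrogate is only as good as the region its training runs cover. Outside the sampled grid it extrapolates blindly, which is the same caveat that applies to any learned approximation.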

I work for a company that’s been using machine learning software for slightly over a decade.

I once worked tech support for people who ran physics simulations. They said that sometimes they had to rerun the simulations if they didn’t come back accurately. I asked how they could tell if they were accurate.

They said it was based on whether it felt right. I still hate that response, but I guess I can’t come up with a better idea, other than doing whatever they’re testing in real life.

Those kinds of simulations are inherently chaotic: tiny changes to the initial conditions can produce wildly different outcomes, sometimes to the point of being nonsensical. Also, since they’re simulating a limited volume, the boundary conditions can cause weird artifacts in some cases.

If you run a simulation of air over an aircraft wing and the end result is a mess of turbulence instead of smooth flow, then you can assume that the simulation was acting weird and not that your wing design is suddenly breaking the rules of physics. When the simulation breaks, it usually does so in ways that are obvious due to previous testing with physical models.
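The sensitivity to initial conditions described above is easy to see in a toy system. The sketch below uses the logistic map, a textbook chaotic system chosen here purely for illustration. It is not a fluid simulation, and the starting values are arbitrary.

```python
def logistic_trajectory(x0: float, steps: int, r: float = 4.0) -> list[float]:
    """Iterate x -> r * x * (1 - x), which is chaotic for r = 4."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


# Two runs whose starting points differ by one part in ten billion.
a = logistic_trajectory(0.2, 100)
b = logistic_trajectory(0.2 + 1e-10, 100)

gaps = [abs(p - q) for p, q in zip(a, b)]
print(f"gap after 5 steps: {gaps[5]:.2e}")
print(f"largest gap over 100 steps: {max(gaps):.2f}")
```

Early on the two runs are indistinguishable, but the gap grows roughly exponentially until they bear no resemblance to each other. A real solver has far more state than this, which is part of why a run can occasionally wander off into results that look obviously wrong.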

That’s … Basically what they said.

No, they said “it felt right,” which is incomprehensible to anyone who doesn’t have any experience with what CFD results generally look like.

I don’t have any experience with “CFD results” but the two sound similar to me. The ellipsis might have made it seem sarcastic, but that wasn’t my intent.

CFD = Computational Fluid Dynamics.

It is kind of what they said, you’re right. I was more pointing out how it could be that they could ‘sense the vibes’ of a CFD result to determine if it is accurate or if the model decided to do something weird. Since it’s a chaotic process and also an artificial one, the starting conditions can yield results that are impossible/not based on reality.

If you look at enough of them you start to notice the kinds of things that go wrong. They would also have a pretty good idea about how their design should perform and if the simulation shows different they’d first want to troubleshoot the simulation before attempting to re-design whatever system they’re creating.

Maybe you’re my old co-worker.

Regardless, I appreciate the detail! Thank you for elaborating.

I don’t know if this is the full explanation, but the article does touch on how the LPM can be tweaked to match physical tests:

The trick is to incorporate experimental measurements to fine-tune the model. If a physics simulation doesn’t agree exactly with experimental data, it is often difficult to figure out why and tweak the model until they agree. With AI, incorporating a few experimental examples into the training process is a lot more straightforward, and it’s not necessary to understand where exactly the model went wrong.
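As a rough illustration of using a few experimental points to pull a model toward reality, the sketch below fits a simple scale-and-offset correction by least squares. This is a deliberately simpler technique than the fine-tuning the article describes, and every number in it is made up for the example rather than real drag data.

```python
def fit_affine_correction(predicted, measured):
    """Least-squares fit of measured ~ scale * predicted + offset."""
    n = len(predicted)
    mean_p = sum(predicted) / n
    mean_m = sum(measured) / n
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    cov = sum((p - mean_p) * (m - mean_m) for p, m in zip(predicted, measured))
    scale = cov / var_p
    offset = mean_m - scale * mean_p
    return scale, offset


# Hypothetical values: model predictions vs. wind-tunnel measurements
# for the same four designs. Here the model reads 5% low across the board.
model_cd = [0.26, 0.28, 0.30, 0.33]
tunnel_cd = [0.273, 0.294, 0.315, 0.3465]

scale, offset = fit_affine_correction(model_cd, tunnel_cd)

# Apply the correction to a new prediction that has no measurement yet.
corrected = scale * 0.31 + offset
print(f"scale={scale:.3f} offset={offset:.3f} corrected={corrected:.4f}")
```

Note that the fit says nothing about why the model was off, which matches the article's point: the correction can be applied without diagnosing the underlying discrepancy.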

Well, who do I trust less? GM or AI?

And large physics models are simulations, aren’t they?

And large physics models are simulations, aren’t they?

No. Not by any definition of “simulation” I know.

Right? Like isn’t this just a different algorithm at the end of the day? This just has an organic (as in a part of the design) way to skip parts of the equation with presumed constants.

And again no “AI” is used, because it does not exist and is not needed for statistical approximations.

Props to this publication for at least leaving the grifter bullshit out of the title. That’s a clue that the technology might actually be useful.

“AI” <<<<<<< large physics models

This is definitely AI, but AI is such a vaguely defined term that it’s basically meaningless. Too many people these days mistake it for meaning “a computer that can think like a human” even though it encompasses everything from LLMs to chess playing algorithms to something like Minecraft zombie pathfinding.

All machine learning is and has always been part of the artificial intelligence field. They’re doing AI, whether that happens to be a trending term or not.

Yah, I have some vague experience in the space and, without getting into things covered by NDAs, I guess I can say…

First, the popular media talks about the classic style of physics solvers as these magical black boxes, but my experience is that they are sufficiently unreliable that I would never trust my life solely to the answers of a solver. They do provide very valuable feedback for refining a design without an endless hardware-rich cycle of destructive testing. Thus, I think that a large physics model is probably going to be the same sort of useful tool.

Second, while the CAE engineers can be very very protective over the time they spend on the two week cycle the article talks about, it’s fucking drudge work and a waste of a good mind. At the same time, the article does not really talk about some of the nitty gritty details. Aerodynamics is a great place to start because there’s less setup but the coefficient of drag is only one problem that needs to be considered.

Third, the good engineers can “see” things intuitively because things do operate with a pattern. Vortices from protruding features… stress fractures from square holes in a beam… etc. This does feel like an area where spicy autocorrect can spicy autocorrect you to a useful answer.

Finally, cycle time for real world engineers is just like the cycle time for software engineers. Nobody wants to go back to the world where programmers submitted a deck of cards and got the printout back a week later.

The only real risk here is that somebody gets high on their own supply and decides that a large physics model is actually predictive and we don’t need the same set of actual physical tests that validate the models.

I don’t know what specific technology they’re using, but remember that not all AI/ML implementations are LLMs.

Yeah, I was referring to spicy autocorrect in the more general sense of something that uses a faster statistical model to replace a slower theoretically derived exhaustive calculation.

I was so happy reading this thread. I got the sense that people were actually reading the article. Thank you, all. I am glad to see this kind of user commentary.

P.S. This is my first post on Lemmy!

I mean, they’re using AI for “polling” now so, why not?