Found it here first: https://mastodon.social/@BonehouseWasps/111692479718694120

Not sure if this is the right community on Lemmy to discuss this?

Not as bad as the AI-generated articles showing up in search results. Some websites I get sent to make absolutely no sense, despite containing a lot of words about all kinds of topics.

I’m looking forward to the day when “certified human content” is a thing, and that’s all search engines allow you to see.

If you look up anything rooting- or custom-related, those sites seem to be half of what comes up.

Yeah, a lot of repair sites come up with pages that have hundreds of Q&As, but oftentimes they don’t make sense or aren’t even related to the topic! Once you realize how much time you’ve wasted on these garbage sites, you don’t even feel motivated to keep looking for answers.

The winning search engine will link to useful and relevant content, whether it is AI-generated or not.

It’s more likely that the winning search engine will be the one that generates the most ad revenue via clicks.

Eventually all content will just be AI generated on the fly. No need to keep dumb content on precious storage that could be used to increase model size.

Eventually all comments will be AI-generated too, carefully crafted to ensure humans follow a paid narrative.

They’ll just make certification so expensive only the wealthy will qualify.

You’ll never hear another perspective again.

Or, you know, we go back to the time when the news media had real gatekeepers and not just any random jackass could churn out some bullshit copy and broadcast it to the world, let alone have it get published by their local paper.

It’s nice that the Internet has democratized access to a national or even global audience, but let’s not pretend for a moment that it hasn’t caused a ton of problems in the process: now many people have no idea what to believe, while others believe whatever they want.

It’s still pretty easy to tell the difference. You have to have a pretty low level of media literacy to not be able to easily spot it. Unfortunately we already know that most people don’t have a clue when it comes to mass media, and even if they did, we also know that people tend to believe whatever reinforces their priors.

AI generation sites are about to become Pinterest 2.0 for clogging up search results.

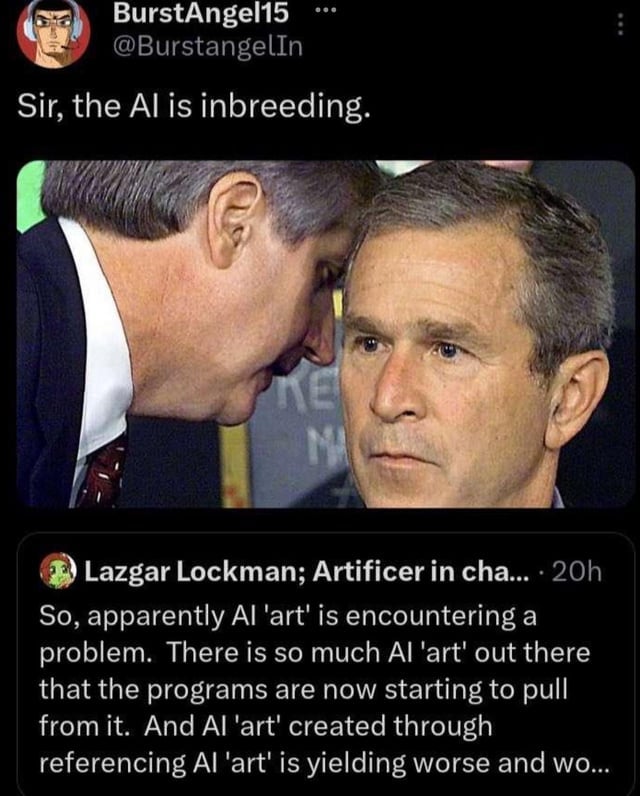

The AI centipede

It’s time to start talking about “memetic effluent.” In the same way corporations polluted our physical world, they’re polluting our memetic world. AI spewing garbage data is just the most obvious way, but corporations have been toxifying our memetic space for generations.

This memetic effluent will make sorting through data harder and harder over the years. The oil and tobacco industries undermined science and democracy for decades with their own memetic effluent in order to protect their businesses. Advertising is its own effluent that distorts and destroys language. Jerry Rubin said it in 1970: “How can I tell you ‘I love you’ after hearing ‘Cars love Shell’?”

While physical effluent destroys our physical environment, making living in the world harder, memetic effluent destroys meaning and makes thinking about and comprehending the world harder. Both are the garbage side effects of the perpetuation of capitalism.

This example of poisoning the data well is just too obvious to ignore, but there are so many others.

It’s interesting, because the idea is basically that knowledge and ideas should be constructive, so as not to pollute the sum of human knowledge.

So that raises the question: what is the constructive conclusion to “memetic effluent”? Without one, is the concept itself an example of such effluent?

I don’t think that’s the implication here. Following the metaphor, pottery and arrow points have been waste products for a while. Prior to the industrial revolution, and specifically prior to the chemical revolution, industrial waste streams weren’t as major a problem (ignoring cholera for a bit). It was the rise of selling chemicals for profit and the extensive use of petroleum that really caused massive problems threatening humanity as a whole.

The implication, then, is that people should be responsible for their memes. Corporations are inherently irresponsible because there are economic incentives to externalize costs, be they environmental or informational. AI garbage as a waste stream would be fine if the data were clearly labeled as such. Unfortunately, at least some AI garbage is intended to be deceptive: there is an economic incentive to produce AI garbage that is hard to distinguish from human output. Since AI garbage can be produced at an industrial scale, there’s a massive stream of waste data that can overload the systems we’ve built to parse and organize data.

There are probably a lot more implications here, but “what are we doing with our information world” is something worth thinking about before we make it completely unusable.

This feels like the precursor to the information apocalypse referenced in the comic Transmetropolitan.

I wonder what will happen as future AIs get trained on AI-generated images scraped from the internet. Would the generated images start to degrade, or would some kind of distinct style pop out?

You mean like this?

Yeah, something like that. I imagine it would be something like JPEG, which degrades as you keep re-encoding it over and over. But I’m not sure what the AI-generated images would look like.

If you are interested in the subject, this is an interesting Medium article.

And should you wish to go directly to the technical source, skipping Medium’s stupidity, here you go.

Why would they not? There’s no way for such a system to know it’s AI-generated unless there’s some metadata that makes it obvious. And even if there were, who’s to say the user wouldn’t want to see them in the results?

This is a nothing issue. It’s not like this is being generated in response to a search; it’s something that already existed, returned as a result because something presumably links it to the search.

To put it bluntly: this is kind of like complaining a pencil drawing on a napkin showed up in the results.

> There’s no way for such a system to know it’s AI-generated unless there’s some metadata that makes it obvious.

I agree with your comment, but I just want to point out that AI-generated images actually often do contain metadata, usually describing the model and prompt used.

By the time a user has shared them, 99% of the time all superfluous metadata has been stripped, for better or worse.

RIP Google search results

The internet was already an unreliable source of information (for some stuff) without AI. Just wait.

This is why Stable Diffusion images are digitally watermarked: to avoid training it on itself.

Well, of course. The search algorithm has no way to know the difference.

AI-generated images often contain model and prompt metadata, so in fact it could potentially tell the difference. Not that that should necessarily mean the image should be excluded.

Almost every image website deletes EXIF metadata.

Why wouldn’t they?

I noticed this with Bing as well.

Most useful red circle

I’ve been seeing this for about a month.