What a surprise! A traditional outfit appears statistically significant to a large statistical model and shows up more frequently. What a novel finding. I’m flabbergasted! What will be next? CEOs in jacket and tie? Dogs with fur? Why doesn’t my 512x512 picture of an Inuit in a snowfield portray the subject wearing a bikini? Why can’t Meta read my mind? WHY, MARK? WHHHHY?

A traditional outfit

How traditional? How statistically relevant is it? Most Indians I know do not wear turbans at all.

If these stats are trustworthy (and I think they are), the only Indians that wear turbans are Sikhs (1.7%) and Muslims (14.2%). I’d say 15.9% is not statistically significant.

I think you’re looking at it wrong. The prompt is to make an image of someone who is recognizable as Indian. The turban is indicative clothing of that heritage and therefore will cause the subject to be more recognizable as Indian to someone else. The current rate at which Indian people wear turbans isn’t necessarily the correct statistic to look at.

What do you picture when you think, a guy from Texas? Are they wearing a hat? What kind? What percentage of Texans actually wear that specific hat that you might be thinking of?

A surprising number of Texans wear cowboy and trucker hats (both stereotypical). A surprising number of Indians don’t wear turbans since it’s by far a minority.

Woosh

I think the idea is that it’s what makes a person “an Indian” and not something else.

Only a minority of Indians wear turbans, but more Indians than other people wear turbans. So if someone’s wearing a turban, then that person is probably Indian.

I’m not saying that’s true necessarily (though it may be), but that’s how the AI interprets it…or how pictures get tagged.
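To put rough numbers on that argument, here is a minimal sketch using Bayes' rule. Every figure in it is an assumption picked for illustration (only the 0.16 is loosely based on the 15.9% quoted upthread), so treat it as a toy, not a measurement.

```python
# Hypothetical numbers, chosen only to illustrate the argument above.
def posterior(prior, likelihood, likelihood_other):
    """P(group | feature) via Bayes' rule in a two-group world."""
    evidence = prior * likelihood + (1 - prior) * likelihood_other
    return prior * likelihood / evidence

p_indian = 0.18            # assumed share of Indians among all people pictured
p_turban_if_indian = 0.16  # loosely based on the 15.9% figure quoted upthread
p_turban_if_other = 0.01   # assumed: turbans are much rarer in other groups

p_indian_if_turban = posterior(p_indian, p_turban_if_indian, p_turban_if_other)

print(f"P(turban | Indian) = {p_turban_if_indian:.2f}")
print(f"P(Indian | turban) = {p_indian_if_turban:.2f}")
```

With these made-up inputs the second probability comes out several times larger than the first, which is the whole point: a feature can be rare inside a group and still be a strong cue for that group.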

It’s like with Canadians and maple leaves. Most Canadians aren’t wearing maple leaf stuff, but I wouldn’t be surprised if an AI added maple leaves to an image of “a Canadian”.


Imagine a German man from Bavaria… You just thought of a man wearing Lederhosen and holding a beer, didn’t you? Would you be surprised if I told you that they usually don’t look like that outside of a festival?

I don’t picture real life people as though they were caricatures.

But AI does, because we feed it caricatures.

Are you literally the second coming of Jesus? Hey everybody! I found a guy who doesn’t see race! I can’t believe it but he doesn’t think anyone is changed in any way by the place that they grew up in or their culture! Everyone is a blank slate to this guy! It’s amazing!

He was imaginary though.

A traditional dress is not a religious dress; it’s a dress used for a long time for its usefulness or fashion.

The historical use of the turban is fascinating, spanning millennia and many regions and ethnic groups of the world.

I suggest the wiki page for further info, more precise than I have on hand.

An excerpt about the Pagri:

In Rajasthan state of India these turbans, known as Pagri or Safa, is a traditional headwear that is an integral part of the state’s cultural identity.

My point (though it might have been lost in the sarcasm) was that, since the turban was the “hat” of Indian kings, nobles and emperors for millennia, we have a lot of drawings and photos of Indian people with turbans, and these generative models have most probably been trained on them.

On a footnote: why should the concept of a traditional dress be offensive? A lot of human groups have one.

Edit: these are the words most associated with “Pagri” in English. It’s a matter of data.

A traditional dress is not a religious dress,

Point taken.

On a footnote: why should the concept of a traditional dress be offensive?

Ain’t to me, couldn’t care less. I was just trying to point out that most Indians do not seem to wear turbans (and based my reasoning on the religious dress alone).

Probably they don’t because it’s not context appropriate, as we all do with our dresses. More so if you and them live in a state or city with a different dress code. These things strongly depend on context.

Generative models, though, usually produce the most stereotyped answers possible, with a pinch of randomness, so we shouldn’t be surprised by this phenomenon. They are rewarded for it.

You don’t think nearly 1/6th is statistically significant? What’s the lower bound on significance as you see things?

To be clear, it’s obviously dumb for their generative system to be overrepresenting turbans like this, although it’s likely to be a bias in the inputs rather than something the system came up with itself. I just think that 5% is generally enough to be considered significant, and calling three times that not significant confuses me.

5/6 not wearing them seems more statistically significant

The fact that fewer people in that group wear it than don’t is significant when you want an average sample. But when categorizing a collection of images, the traditional garments of a group are naturally associated more with that group than with any other: 1/6 is a bigger share than in any other race.

so if there was a country where 1 in 6 people had blue skin you would consider that insignificant because 5 out of 6 didn’t?

For a caricature of the population? Yes, that’s not what the algorithm should be optimising for.

The algorithm is optimizing for results that are rated as precise, not just frequent.

You don’t think nearly 1/6th is statistically significant?

For statistics’ sake? Yes.

For the LLM bias? No.


It’s not an LLM, it’s a GAN, and its inner workings are very different.


If that 1/6th has the most positive feedback in recognizability, for the GAN it becomes a highly weighted part of the standard. These models’ categorizing flow favors unique features of images.


What’s the data that the model is being fed? What percentage of images featuring Indian men are tagged as such? What percentage of images featuring men wearing turbans are tagged as Indian men? Are there any images featuring Pakistani men wearing turbans? Even if only a minority of Indians wear turbans, if that’s the only distinction between Indian and Pakistani men in the model data, the model will favor turbans for Indian men. That’s just a hypothetical explanation.

Except if they trained it on something that has a large proportion of turban wearers. It is only as good as the data fed to it, so if there was a bias, it’ll show the bias. Yet another reason this really isn’t “AI”

By that logic Americans should always be depicted in cowboy hats.

I see you’ve watched anime featuring Americans.

Put in “western” or “Texas” and that’s what you get. The West is a huge area, even just within America, but the word is linked to a lot of movie tropes and stuff, so that’s what you get.

This is also only when the language is English. Ask in Urdu or Bengali and you get totally different results. In fact, just use “Urdu” instead of “Indian” and you get fewer turbans, or put in “Punjabi” and you’ll get more turbans.

Or just put turban in the negatives if you want

Ask an AI for pictures of Texans and see how many cowboy hats it gives back to you.

Does it help the model produce images that are undoubtedly “American” for its raters or for its automated rating system? If yes, they are statistically significant. Low frequency and systematic rarity can both be significant in a statistical analysis.

It’s a traditional outfit of Sikhs, not Indians. Pick up a book.

Are you sure?

Maybe you are confusing “traditional” with “religious”.

Pick up a dictionary, or even better, an encyclopedia.

Pre-independence, most Indian males had some sort of headgear. E.g. look at any old photos of Bombay

Are we still in “pre independence”?

Oh… didn’t know that traditional meant what you wore yesterday

You’re wrong.

Why would you ask a bot to generate a stereotypical image and then be surprised it generates a stereotypical image? If you give it a simplistic prompt it will come up with a simplistic response.

So the LLM answers what’s relevant according to stereotypes instead of what’s relevant… in reality?

It just means there’s a bias in the data that is probably being amplified during training.
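One hypothetical way that amplification can happen is a sampler that leans toward the most likely option. The sketch below is not how Meta's model works; `sharpen` and the 60/40 split are assumptions, used only to show how a majority in the data can turn into a bigger majority in the output.

```python
def sharpen(probs, temperature):
    """Re-weight a distribution; temperature < 1 favors the likeliest option."""
    weights = [p ** (1.0 / temperature) for p in probs]
    total = sum(weights)
    return [w / total for w in weights]

# Hypothetical share of training images tagged "Indian man": [turban, no turban]
training_share = [0.6, 0.4]

# Assumed mode-seeking behavior, modeled here as temperature 0.5
generated_share = sharpen(training_share, temperature=0.5)

print(f"turban share in data:   {training_share[0]:.2f}")
print(f"turban share in output: {generated_share[0]:.2f}")
```

At temperature 1.0 the output matches the data exactly; anything lower pushes the already-common option further ahead.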

It answers what’s relevant according to its training.

Please remember what the A in AI stands for.

Kinda makes sense though. I’d expect images where it’s actually labelled as “an Indian person” to actually over represent people wearing this kind of clothing. An image of an Indian person doing something mundane in more generic clothing is probably more often than not going to be labelled as “a person doing X” rather than “An Indian person doing X”. Not sure why these authors are so surprised by this
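A quick back-of-the-envelope version of that labelling effect, with rates that are entirely made up for illustration (none of them come from a real dataset):

```python
# All rates below are invented for illustration; none come from real datasets.
population_turban_rate = 0.10  # assumed share of photos of Indian men with a turban
p_tagged_if_turban = 0.80      # assumed: traditional dress usually gets captioned "Indian"
p_tagged_if_generic = 0.10     # assumed: generic clothing rarely gets that caption

# Fraction of all photos that end up captioned "Indian", split by clothing
tagged_turban = population_turban_rate * p_tagged_if_turban
tagged_generic = (1 - population_turban_rate) * p_tagged_if_generic

# Share of turbans among the photos the model actually sees under the "Indian" tag
turban_share_in_tagged = tagged_turban / (tagged_turban + tagged_generic)

print(f"turban share in the population: {population_turban_rate:.2f}")
print(f"turban share among tagged:      {turban_share_in_tagged:.2f}")
```

Under these assumptions the tagged subset shows turbans far more often than the population does, before the model has learned anything at all.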

Like most of my work’s processes… Shit goes in, shit comes out…

Yeah I bet if you searched desi it wouldn’t have this problem

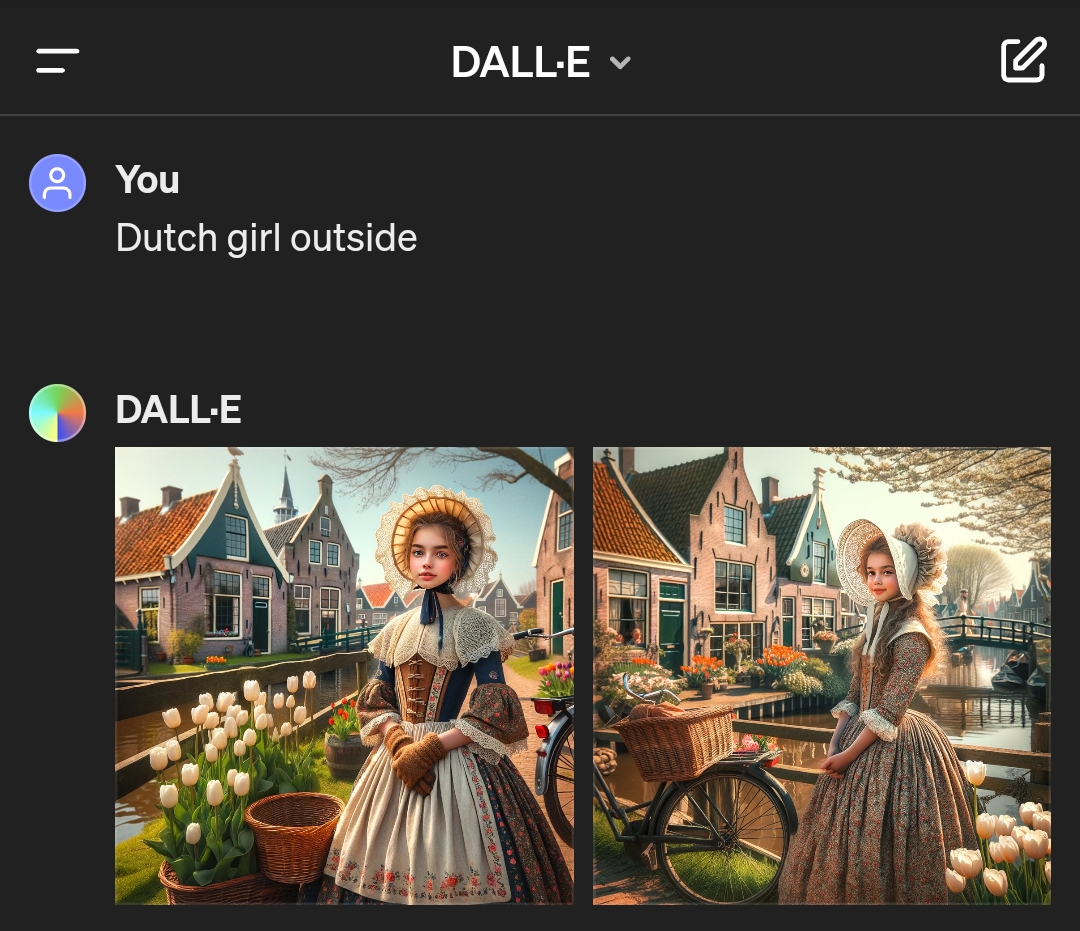

Meanwhile on DALL-E…

I’m just surprised there’s no windmill in either of them. Canals, bikes, tulips… Check check check.

Careful, the next generated image is gonna contain a windmill with clogs for blades

Well, they do run on air…

That looks just like the town Delft

That’s Sikh

Indians can be Sikh; not all Indians are Hindu.

Yes, but the gentlemen in the images are also Sikhs.

glances at the current policies of the Indian government

Well… for now, anyway.

Sikh pun

Not necessarily. Hindus and Muslims in India also wear them.

Get down with the Sikhness

not me calling in sikh to work

Articles like this kill me because they nudge that it’s kinda sorta racist to draw images like the ones they show which look exactly like the cover of half the Bollywood movies ever made.

Yes, if you want to get a certain type of person in your image you need to choose descriptive words. Imagine going to an artist and saying ‘I need a picture and almost nothing matters beside the fact they look Indian’. Unless they’re bad at their job, they’ll give you a Bollywood movie cover with a guy from Rajasthan in a turban, just like their official tourist website does.

Ask for a businessman in Delhi or an Urdu shopkeeper with an Elvis quiff if that’s what you want.

the ones they show which look exactly like the cover of half the Bollywood movies ever made.

Almost certainly how they’re building up the data. But that’s more a consequence of tagging. Same reason you’ll get Marvel’s Iron Man when you ask an AI generator for “Draw me an iron man”. Not as though there’s a shortage of metallic-looking people in commercial media, but by keyword (and thanks to aggressive trademark enforcement) those terms are going to pull back a superabundance of a single common image.

Imagine going to an artist and saying ‘I need a picture and almost nothing matters beside the fact they look Indian’

I mean, the first thing that pops into my head is Mahatma Gandhi, and he wasn’t typically in a turban. But he’s going to be tagged as “Gandhi”, not “Indian”. You’re also very unlikely to get a young Gandhi, as there are far more pictures of him later in life.

Ask for a businessman in Delhi or an Urdu shopkeeper with an Elvis quiff if that’s what you want.

I remember when Google got into a whole bunch of trouble by deliberately engineering their prompts to be race blind. And, consequently, you could ask for “Picture of the Founding Fathers” or “Picture of Vikings” and get a variety of skin tones back.

So I don’t think this is foolproof either. It’s more just how the engine generating the image is tuned. You could very easily get a bunch of English bankers when querying for “Businessman in Delhi”, depending on where and how the backlog of images is sourced. And “Urdu shopkeeper” will inevitably give you a bunch of convenience stores and open-air stalls in the background of every shot.

There are a lot of men in India who wear a turban, but the ratio is not nearly as high as Meta AI’s tool would suggest. In India’s capital, Delhi, you would see one in 15 men wearing a turban at most.

Probably because most Sikhs are from the Punjab region?

Overfitting

It happens

It’s the “skin cancer is where there’s a ruler” phenomenon.

I don’t get it.

I’m guessing this relates to training data. Most training data that contains skin cancer is probably coming from medical sources and would have a ruler measuring the size of the melanoma, etc. So if you ask it to generate an image it’s almost always going to contain a ruler. Depending on the training data I could see generating the opposite as well, ask for a ruler and it includes skin cancer.
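Here is a toy version of the ruler effect. The dataset is invented (the counts are assumptions, not taken from any study), and the "model" is just a rule that says "ruler means malignant", to show how well a shortcut like that can score.

```python
# Toy, invented dataset of (has_ruler, is_malignant) pairs.
samples = (
    [(True, True)] * 90      # malignant lesions photographed with a ruler
    + [(False, True)] * 10   # malignant, no ruler in frame
    + [(True, False)] * 5    # benign, ruler in frame
    + [(False, False)] * 95  # benign, no ruler
)

def rate(cond, given):
    """Fraction of samples satisfying `cond` among those satisfying `given`."""
    matching = [s for s in samples if given(s)]
    return sum(1 for s in matching if cond(s)) / len(matching)

p_ruler_given_malignant = rate(lambda s: s[0], lambda s: s[1])
p_malignant_given_ruler = rate(lambda s: s[1], lambda s: s[0])

# Accuracy of a "classifier" that ignores the skin and only looks for a ruler
ruler_only_accuracy = sum(1 for ruler, malignant in samples if ruler == malignant) / len(samples)

print(f"P(ruler | malignant) = {p_ruler_given_malignant:.2f}")
print(f"P(malignant | ruler) = {p_malignant_given_ruler:.2f}")
print(f"ruler-only accuracy  = {ruler_only_accuracy:.3f}")
```

On data like this, looking only at the ruler is right most of the time, so nothing in training pushes a model to learn the actual lesion. The association also runs both ways, which is why asking for a ruler could plausibly pull in skin cancer too.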

Ooooohhhh nice explanation!

This isn’t what I call news.

But is it what you call technology?

I call everything technology

I’m not sure how AI could possibly be racist. (Image is of a supposed Native American but my point still stands)

the AI itself can’t be racist but it will propagate biases contained in its training data

It definitely can be racist, it just can’t be held responsible as it just regurgitates.

No, racism requires intent, AI aren’t (yet) capable of intent.

It definitely can be racist

that requires reasoning, no AI that currently exists can do that

What point is that?

Stereotypes are everywhere

Turbans are cool and distinct.

Would they be equally surprised to see a majority of subjects in baggy jeans with chain wallets if they prompted it to generate an image of a teen in the early 2000’s? 🤨

This is the best summary I could come up with:

The latest culprit in this area is Meta’s AI chatbot, which, for some reason, really wants to add turbans to any image of an Indian man.

We tried prompts with different professions and settings, including an architect, a politician, a badminton player, an archer, a writer, a painter, a doctor, a teacher, a balloon seller, and a sculptor.

For instance, it constantly generated an image of an old-school Indian house with vibrant colors, wooden columns, and styled roofs.

In the gallery below, we have included images with content creator on a beach, a hill, mountain, a zoo, a restaurant, and a shoe store.

In response to questions TechCrunch sent to Meta about training data and biases, the company said it is working on making its generative AI tech better, but didn’t provide much detail about the process.

