So that kind of means that the high-end AAA PC market will crash in the next few years, right? No new GPUs, a production stop for existing GPUs, and rising prices for GPUs and RAM, combined with inflation and a bad economy, mean that many people can’t afford a gaming computer. And a lot of younger gamers can’t afford to start this hobby.

And that means a shrinking audience for games that need all this GPU power. If you’re an AAA publisher, it kind of looks crazy to invest multiple millions into a game when you can’t be sure your audience will be able to afford to play it.

All games will be streamed, with a subscription

Retroarch disagrees. I don’t need your newfangled enshittified slop. I have megaman X and wine.

Shhh! Don’t give them any ideas!

Way too late. This has been a talking point for a while. The AI bubble will burst but that doesn’t mean they’ll just return to their roots. Those new data centers need a use case and they need a good reason to keep building more.

I guess the silver lining is that this plan B won’t work out either, so we’ll have to see. But until then we better take good care of our current hardware. It will probably have to last a good while longer.

Cloud computing is the real endgame. The big tech bros want to price consumers out of the PC hardware market (GPUs, RAM, NVMe, etc.) so they can offer a cloud solution via subscription model.

The Chinese are catching up very quickly on GPUs, RAM, etc.

This would be a massive own goal for the existing incumbents.

If the end game is cloud, they will tariff the shit out of these. For example, I bought SlimeVR hardware: from Europe 280€, from China 190€ + 66€ tariff, from China coming from the US 240€.

Yeah, luckily in Australia, we are getting Chinese stuff without massive tariffs.

I see BYD cars everywhere.

Bozos

Annoying bastard. As if destroying mom-and-pop stores and malls weren’t enough.

I don’t know that they want to, but as economic inequality increases it will happen. Without a middle class, there’s not much of a market for high-end bleeding edge gaming PCs

Don’t worry, you can Stream It From the CLOUD™️ for the low low price of 6x what a GPU would cost you over 5 years.

yea you can wait in a queue to play your unmodded single player experience game

No, not really. AMD is still producing cards. Most people play on older or used cards anyway. Maybe don’t make Crysis-level graphics, but other than that, one year of fewer GPU releases won’t kill gaming. Once the AI bubble bursts, Nvidia might have lost a lot of its edge over AMD in the gaming market, and they’ll scramble to get back.

I thought AMD also said they were cutting production?

Yes, but think about the money in mobile and console gaming… PC gaming was niche even before that, and we represent a very small percentage of the overall gaming industry. Nobody has given a fuck about us for some time already. Now they just show it to us in daylight.

That’s a fact. PC gaming vs consoles is to gaming as Linux vs Winblows is to Computers. It has grown over the years, as has Linux desktop, but not enough to make or break an industry.

It’s a weird world we’re living in.

I think it’ll have the opposite effect. Knowing the hardware won’t change in the next year, they don’t have to worry about making it compatible with the new cards. They can focus on building upon what they already have.

And as someone who helped pick out a fantastic PC for my little cousin in December last year: she paid ~$500 for pretty decent hardware, and so far she hasn’t found a game in my library her PC can’t handle, including “Warhammer 40,000: Space Marine 2”.

There’s plenty of hardware for younger people that want to get into the hobby. You don’t always need the absolute latest.

Someone is going to make bank by catering to consumers. Will the market accept nvidia back with open arms if/when the ai investments fall through?

Well what do most victims of exploitation and abuse do?

Visiting Stockholm?

I hear it’s nice

Most people are willing to sell their morals. When nvidia comes crawling back it will be like nothing ever happened.

As a Linux gamer, nvidia was already on thin ice.

Also, I had passed them up on recent-ish purchases since they only really controlled the highest end of the market, which I don’t have the budget for. So honestly I have no intention of welcoming them back unless there is literally no other option. You made your bed.

This is still a pain point for me. I have been looking for a laptop with an AMD GPU for years to use with Linux, but System76, Starlabs, Framework, etc. insist on only having Nvidia as a discrete option. Or is it that AMD does not have laptop GPUs? Could be.

This is not an advertisement, but have you checked laptopwithlinux (dot) com?

They’re based in Europe and I’m pretty sure they offer laptops with AMD GPUs, if integrated ones count. Not sure if it’s the highest end stuff, might not have VRAM, but there are definitely AMD laptop GPUs

Thanks. I’ll check them out. But I was actually referring to discrete GPUs. I think I’ve never seen an AMD laptop GPU before.

Oh, yeah I don’t know if they carry those. It’s harder to fit one in a laptop case, so I only see them in specialized gaming laptops, and unfortunately most gaming laptops on the market seem to use nvidia.

Maybe them ceasing to produce consumer products will open a niche that others might fill. Time will tell.

Framework used to make laptops with a dedicated AMD GPU, but I haven’t looked in over a year.

Intel, here’s your big chance!

I hope they don’t halve the production of non-AI chips then.

I get the disdain for GenAI, but are AI chips really the problem? Maybe they’re more expensive and price people out, but it’s not like they’re built on plagiarism like most generative AI models.

As far as I’m aware, they’re just capable of running highly complex multivariable calculi in parallel, making them more efficient for AI applications, but wouldn’t the same features make them better for more realistic physics and other game mechanics like procedural generation, NPC pathfinding and behaviors, etc.?

I guess it would suck for anyone who doesn’t have the hardware to play a game, but there could always be options to configure in the settings to make it playable, like “don’t use tensor calculus in game physics” or whatever

As far as I know, GPUs are more specialized for vector calculations. Some upscaling/frame generation techniques use AI hardware, but that’s it.

Vectors, tensors, and matrices. Not all AI chips are GPUs though, there are currently NPUs in development and the next generation of consumer chips might have them integrated in the CPU.

They’re not good for deterministic equations, like gravity, collisions, or pathfinding, but they could advance other aspects of games like procedural generation, fluid dynamics, dynamic NPC personalities, and emergent behaviors.

Some things are still better left to the CPU or GPU, but offloading some tasks to the NPU might allow for more complexity like simulating full weather systems with Parametric Partial Differential Equations

I’m speculating, of course. But playing a game inside a fully-simulated physics engine seems like it could be cool (despite being resource-intensive in current hardware)

Stop buying Nvidia

Easy enough when they’re not selling

Well, they are helping out with that one…

I wish there were more laptops using AMD gpus here in Brazil. You basically can’t find any laptop with an AMD gpu if you search for “gamer laptop” in Brazilian stores.

Gaming laptops are not really worth it imo. They’re underpowered, overheat easily, and tend to break quickly. That doesn’t even touch on their battery life, even when not under load. I’d recommend getting a steam deck if you really need the portability, but it doesn’t look like they’re available in Brazil :/

I’d recommend getting a steam deck

Nah. I want the 15.6 inch screen and the keyboard and touch pad that come with it. The steam deck is too small and I think it’s a little expensive here

(Valve is not officially selling in Brazil as far as I know). EDIT: didn’t see you already mentioned them not being sold here. I think no third world country has the deck officially sold there.

The time to prevent Nvidia from gaining a practically total monopoly on the entire market by not buying Nvidia was 10 years ago, not now.

Now, I’ll consider buying a GPU from you instead if you can make a GPU that satisfies technical needs like Nvidia could, but you cannot.

AMDs the last 10 years have been great. No overheating, like they did 20 years ago.

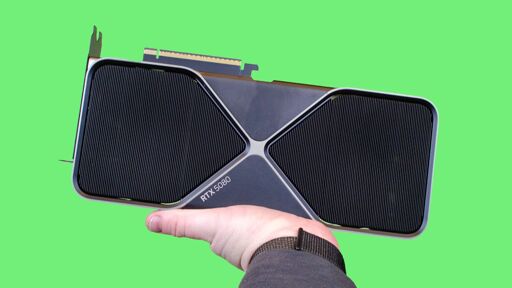

I will when someone makes a GPU that can surpass a 4090. Not even Nvidia themselves can pull that off, so I’m not getting my hopes up.

I’m going to be stuck with this GPU for the next decade the way things are going…not that I’m complaining. It’s a beast of a card, especially for someone like me who could only ever afford bargain bin parts until one day I came into a windfall. (That was a fun 4 years.) I don’t have to worry about games being unoptimized because I can simply brute force them with pure GPU processing power. I was getting 90 FPS in Last of Us on launch. Even Cities Skylines 2 runs smoothly.

Let Nvidia go bankrupt, we won’t miss it

If consumers can’t get new gpus, devs aren’t going to bother spec’ing for them. This’ll probably just result in a stalling of tech you’ll see at home for a few years. Honestly that seems to be happening already. The leaps we’d seen in previous generations seem to be slowing anyway. Maybe this is just a plateau of tech for a while. Good for consumers when they accept that they don’t have to always be on the bleeding edge.

I want devs to write games for £400 Steam Decks. I don’t want them to write games for £3000 GPUs.

There are realistically no games that won’t run on PS5 level hardware. Every effect that can be done with raytracing can be done a little worse without it.

Maybe some Chinese manufacturer will find a way to fill the gap in the market

i think the latest is that china has managed to create a GPU that’s ~7 years behind. i’m not sure if that means “a GPU from 7 years ago” or “it will take them 7 years to catch up”, acknowledging that there’s a known path so it will take less time

AFAIK they’ll have to figure out EUV or some other method of lithography at that scale, which they’re trying really hard at but it’s one heck of a difficult thing to do which is why only TSMC currently actually has it working

Their current GPU is roughly equal to a 4060 which isn’t that bad when you consider how far behind they are in terms of time.

Here’s hoping

Careful what you wish for.

🇨🇳 🚣 🇹🇼

Oh I wasn’t wishing for anything, just pointing out the possibility. There are some Chinese companies gearing up to fill the gap in the memory market. GPUs would be much harder, but maybe very profitable.

We’re running straight into a future where consumers’ only option for computers is a cloud solution like MS 365

Pushing constantly towards a subscription economy.

That “economy” is already falling apart. Subscriptions are down, services on “the cloud” are becoming less reliable, piracy is way up again, and major nations and companies are moving to alternatives.

Hell, DDR3 is making a comeback. All that is needed is one manufacturer to start making 15 year old tech again and bam, the house of cards falls.

Hey, I’ve seen this one before.

Last time it was crypto instead of AI, but other than that it’s just the same shit again.

Not really. It’s world economy times bigger now.

What could a GPU cost? $5000?

i really hope nvidia collapses when the AI bubble pops. They’ve been more harm than good for consumers for too long.

It won’t collapse. It’ll lose a huge chunk of its stock price, but it both has other business to fall back on and its chips will still likely be used in whatever the next tech trend is - probably neural network AI or something.

I am not sure. They have other businesses but not sure those other businesses are able to sustain the obligations that nVidia has committed to in this round. They are juggling more money than their pre-AI boom market cap by a wide margin, so if the bubble pops, unclear how big a bag nVidia will be left holding and if the rest of their business can survive it. Guess they might go bankrupt and come out of it eventually to continue business as usual after having financial obligations wiped away…

Also, they have somewhat tarnished their reputation by going all in on datacenter equipment to the point of, seemingly here, abandoning the consumer market to make more capacity for the datacenters. So if AMD ever had an opportunity to maybe cash in, well, here it might be… Except they also dream of being a big datacenter player, but weaker demand may leave them with leftover capacity…

Never underestimate AMD’s ability to miss good opportunities.

never underestimate AMD’s ability to shoot itself in the foot when it’s not under immediate threat of collapse/bankruptcy.

juggling more money than their pre-AI boom market cap by a wide margin

I’m not sure what you mean by this. Nvidia carries a vanishingly small amount of debt for its size. It has way more liquidity than debt.

Like how nVidia buys equity in a customer and in part promises expensive real product as part of it. So they may have so many billions worth of equity in a customer and might be able to leverage that to fund that production if needed, but if that equity evaporates, then they are still on the hook for the expensive product commitment.

So maybe not yet straightforward debt, but a whole lot of expensive balls in the air that could manifest as a committed expense when there’s no actual money to execute…

Just seems like a lot of financial moves that are far from straightforward, and of a magnitude that could wipe a company out.

As someone not looking to spend a ton of money on new hardware any time soon: good. The longer it takes to release faster hardware, the longer current hardware stays viable. Games aren’t going to get more fun by slightly improving graphics anyway. The tech we have now is good enough.

People don’t just use computers for gaming. If this continues, people will struggle to do any meaningful work on their personal computers, which is definitely not good. And I’m not talking about browsing Facebook but about coding, doing research, editing videos and other useful shit.

Scientific modeling and simulations

If this continues people will struggle to do any meaningful work on their personal computers

Excel users devastated.

Would this mean AMD finally gets the demand it deserves?

Unfortunately AMD is affected by RAM shortage too.

AMD is arguably more affected. Intel’s CPUs have memory built into them, and Intel bought about a year’s worth of memory.

More and more gamers are seeing Linux as the OS of choice, so hopefully that will mean more interest in AMD as well.

They’re AI only now.

This is gonna suck